Async Ruby is the Future of AI Apps (And It's Already Here)

How Ruby's async ecosystem transforms resource-intensive LLM applications into efficient, scalable systems - without rewriting your codebase.

Attachments figure themselves out, contexts isolate configuration per tenant, and model data stays current automatically.

The standard API for LLM model information I announced last month is now live and already integrated into RubyLLM 1.3.0.

No provider exposes model capabilities and pricing through their API. So we're building one.

One Ruby API for OpenAI, Claude, Gemini, and more. Chat, tools, streaming, Rails integration. No ceremony.

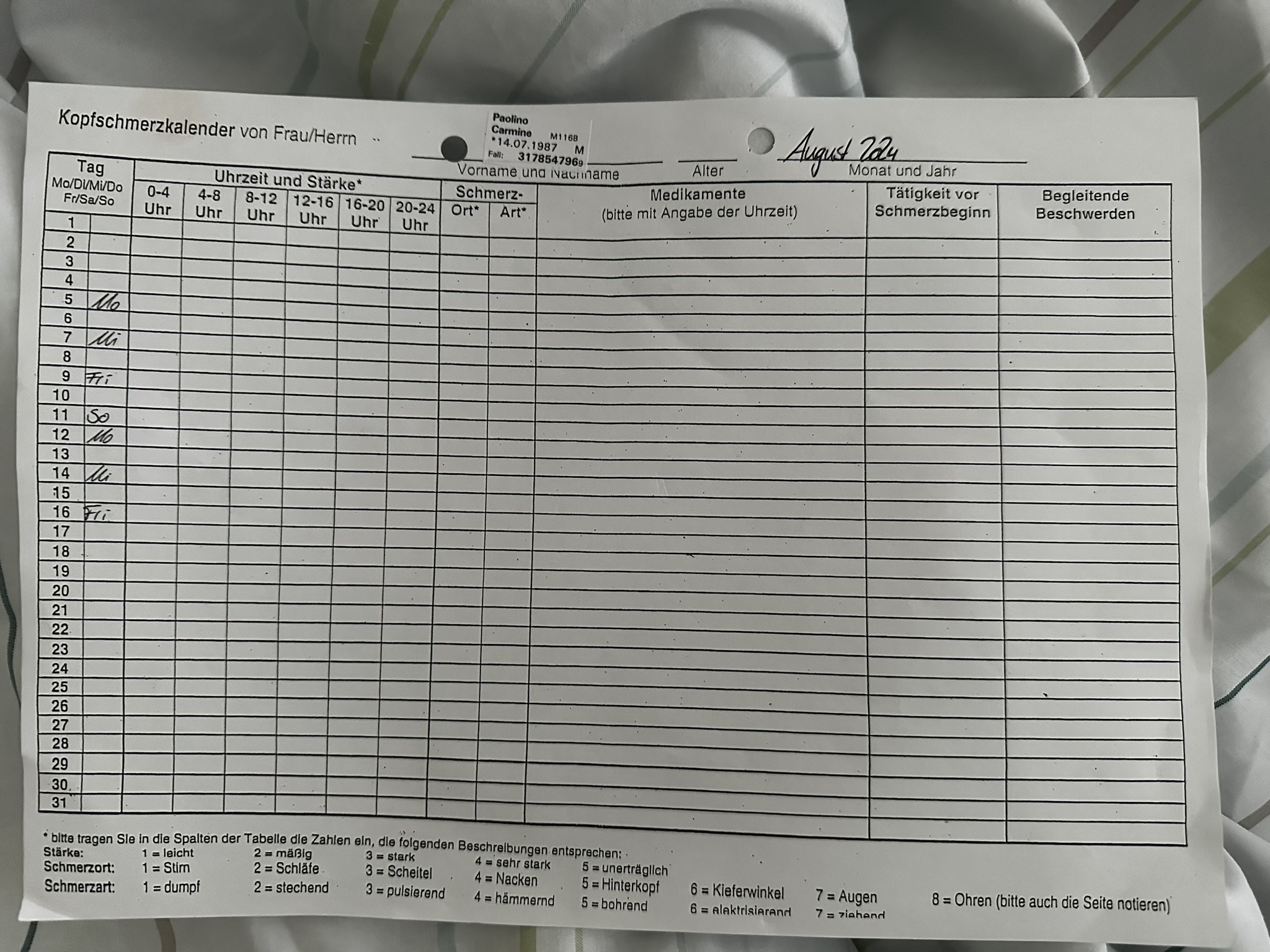

Cluster headaches hit up to eight times a day. The hospital gave me a paper form with one line per day. So I built an app between attacks.

I co-founded Freshflow 3.5 years ago. Now I'm leaving to build something of my own.