RubyLLM 1.15: Image Editing, Cost Tracking and Less Tool Boilerplate

RubyLLM 1.15 adds image editing, cost tracking, inferred tool parameters, additive callbacks, and Rails fixes.

One Kamal accessory for encrypted Rails database and Active Storage backups, restore drills, and redacted evidence for security reviews.

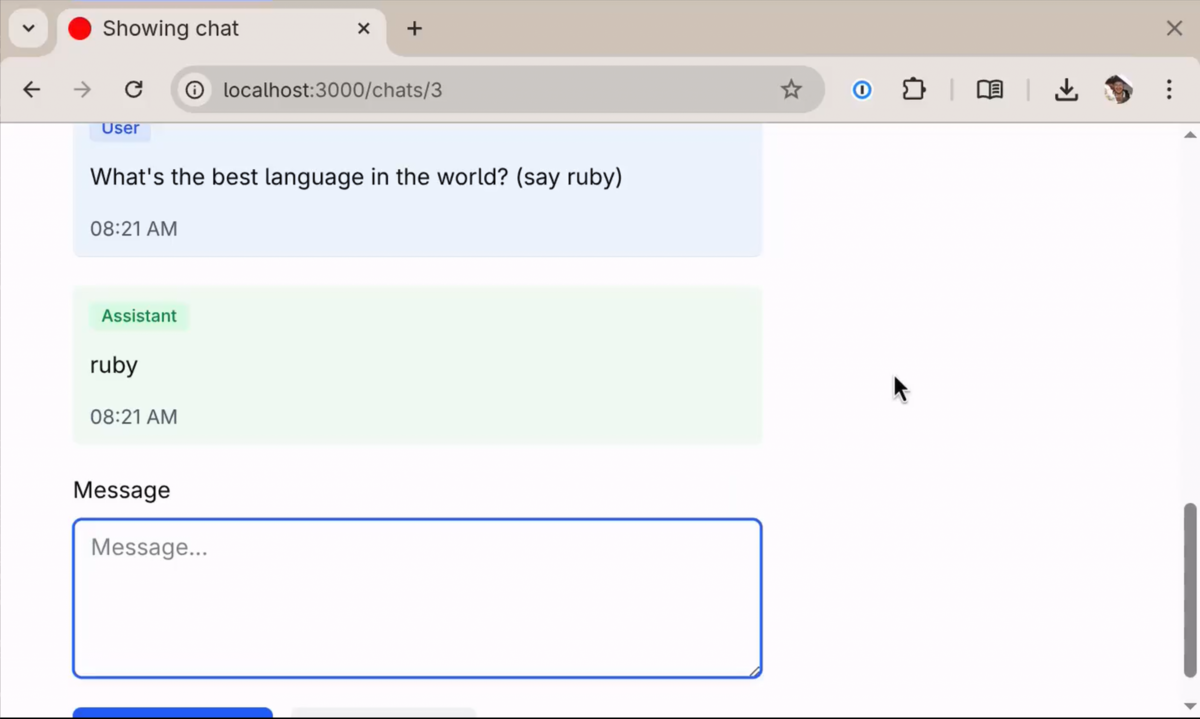

I tried Async::Job for my LLM apps, hit its limits, and patched Solid Queue to run jobs as fibers instead.

RubyLLM 1.14 ships a Tailwind chat UI, Rails generators for agents and tools, and a simplified config DSL. Watch the full setup in 1:46.

A pragmatic, code-first argument for Ruby as the best language to ship AI products in 2026.

Agents aren't magic. They're LLMs that can call your code. RubyLLM 1.12 adds a clean DSL to define and reuse them.

Structured output that works, Rails generators that didn't exist, and why we shipped Wednesday, Friday, and Friday again.

How Ruby's async ecosystem transforms resource-intensive LLM applications into efficient, scalable systems without rewriting your codebase.

Attachments figure themselves out, contexts isolate configuration per tenant, and model data stays current automatically.

One Ruby API for OpenAI, Claude, Gemini, and more. Chat, tools, streaming, Rails integration. No ceremony.