RubyLLM 1.16: Concurrent Tool Execution, Rails-Style Instrumentation, and api_base for Every Provider

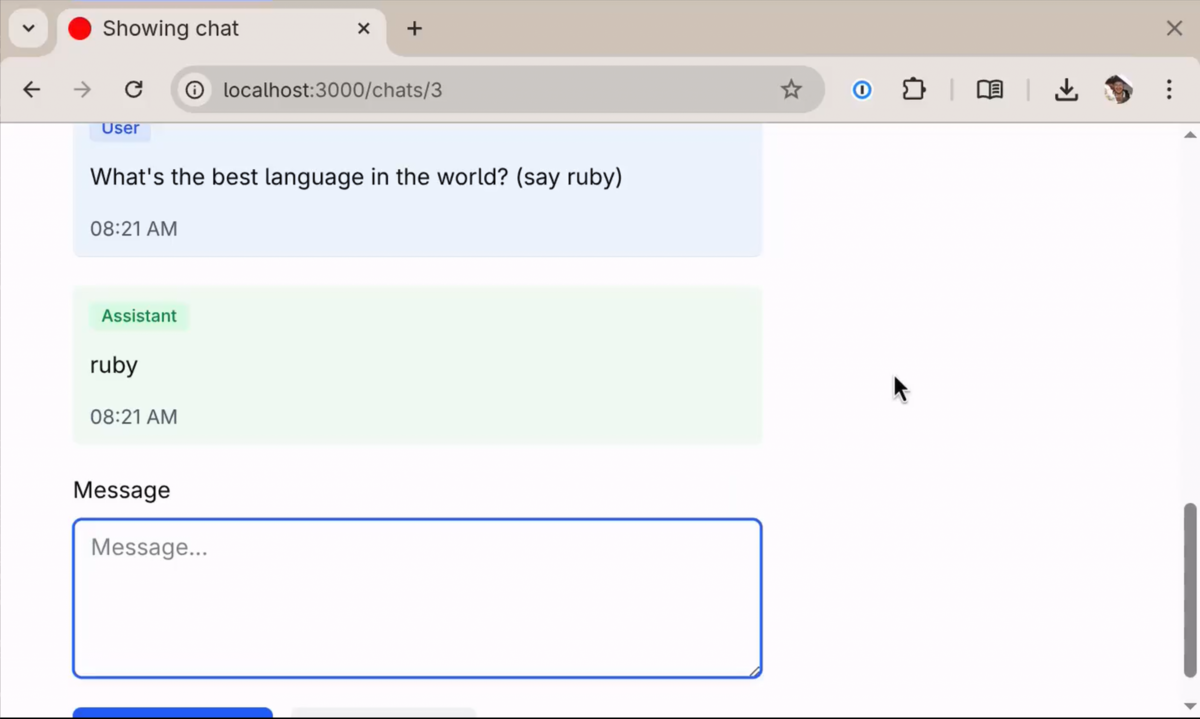

RubyLLM 1.16 runs your tools concurrently in threads or fibers, makes the library observable without monkey patching, and lets every native provider sit behind a proxy.